Imagine a fake video of a popular actor endorsing a politician goes viral the night before an election. It's a deepfake, completely fabricated, and spreading fast.

What happens next depends entirely on where you live.

In India, the platform hosting that video has about three hours to take it down. Miss the window, and they lose their legal protection. The law doesn't care how the fake was made or where it came from. It cares that it's still up, still spreading, still doing damage.

In the European Union (EU), regulators, if they chose to enforce, would instead go after the AI system that created the fake in the first place, long before it ever had a chance to go viral.

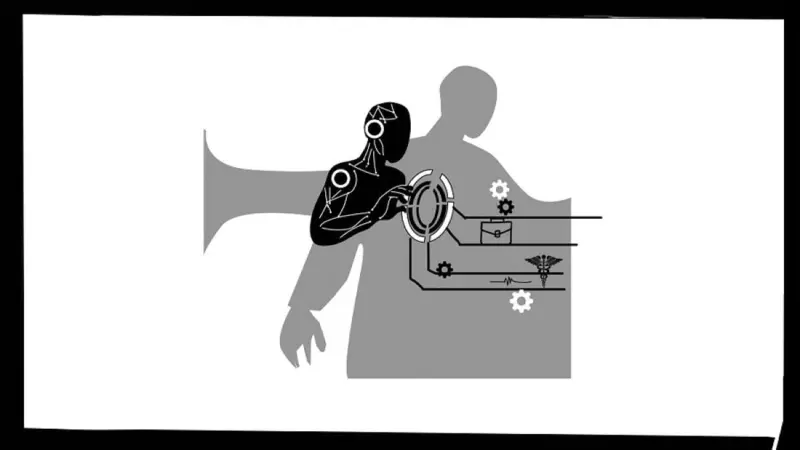

The thinking there is that if you build the machine safely, the mess is less likely to happen at all. This difference reveals the contrasting ways major democracies are approaching AI regulation. The EU is regulating the machine. India is regulating the mess it leaves behind.

For instance, the EU has passed a sweeping AI Act that sorts AI systems into risk categories, with higher-risk systems facing stricter rules than lower-risk ones. Through regulation, countries such as Belgium are having these safety features built into products before they are even shipped.

Deploying AI in sensitive domains like healthcare, hiring, or credit requires thorough preparation. Although the EU has begun implementing these regulations, claims that they have hindered innovation, as argued in the Draghi Report, have led to a 12-month pause in enforcing the Act.

Neither India nor the UK has adopted an EU-inspired AI statute. Instead, both are trying to cope by stitching together older rules, regulations, data protection frameworks, and so on. For India, there's a set of AI governance guidelines that lay out seven broad principles, things like "Innovation over Restraint" and "Trust is the Foundation."

These are, however, suggestions, not enforceable rules. The UK, in contrast, has asked sectoral regulators in health, finance, and beyond to adapt existing rules wherever possible, so that AI is regulated where it matters most while things stay flexible as the technology evolves.

Thus, when AI-generated intimate images started spreading on X without consent, UK authorities applied existing laws criminalising nonconsensual sharing of intimate pictures, forcing X to restrict the content. It worked, and it was fast.

As AI leaders, India and the UK aren't ignoring regulation. They're just making a different bet. And you can see why. For India, with a 1.4-billion population, AI could be genuinely transformative.

Getting crop advice to a farmer in Bihar in his own language, or helping a nurse in a rural clinic triage patients faster: these things matter. Worrying about whether some foundation model poses a "systemic risk" feels like tomorrow's problem.

Both these countries have kept a close eye on the EU, where experts and even Big Tech companies are struggling with these rules. Apple and Meta held back AI products in Europe to avoid compliance issues, leaving citizens without access.

The idea, therefore, is to find a middle ground: stricter than the US's largely hands-off policy but lighter than the EU's over-precautionary approach, allowing companies to innovate while still being accountable.

Impacting life outcomes

Here's where things get tough. India has taken an aggressive stance on AI-generated deepfakes or misinformation. Platforms are expected to take down flagged material within two to three hours, failing which, they lose their safe-harbour protection and become legally responsible for everything on their servers.

That's an enormous liability, and under such pressure, companies rarely investigate; they simply delete the content. The UK balances regulation better, but as Elon Musk discovered, AI-generated content faces more rules than the AI systems themselves.

The real problem is that neither country has binding oversight of systems that shape real lives, like loan-screening algorithms or systems that suppress women's voices on LinkedIn.

A comedian's satire can vanish in hours, yet opaque scoring systems determining someone's financial future can operate unchecked for years. These priorities seem backwards when algorithms shape life outcomes with zero accountability while surface-level content gets immediate attention.

What does this mean for people on the ground? India has become a high-stress but high-reward place to operate for global companies. Compliance teams have to run nearly real-time takedown systems, label AI features, and tune their filters to match Indian legal standards.

One quiet response to all this pressure is what some are calling the "silent switch-off," where platforms are simply disabling their more advanced AI features rather than risk the liability, protecting their interests but leaving consumers with 'weaker' products.

Indian startups feel this strain all the more. They struggle to build expensive compliance systems, user logs, takedown workflows, and traceability tools. Large companies can absorb those costs, but a ten-person team running on seed funding cannot.

The methods adopted by the UK and India are not necessarily wrong. But when AI systems become embedded in courts, welfare systems, and banks, the current model may not hold.

The same governments that want AI to power Viksit Bharat in India and the AI Opportunities Action Plan in the UK will eventually have to reckon with AI systems making decisions in critical domains. Principles in a guidance document won't be enough when something goes seriously wrong.

The question is whether light-touch regulation can actually work. Open tools and shared standards, like the New Delhi Declaration and the UK's Inspect framework, offer a practical middle ground.

But here's the harder truth: deleting deepfakes quickly is easy compared to finding who's responsible when algorithms silently ruin lives. That accountability gap is the real challenge we must solve now.

Kanadpriya is a business professor at Costello School of Business, George Mason University, the US; Amanda is CEO, OpenUK and OpenHQ, the UK. Moumita Mukherjee, communication strategist, The Institute of Breast Diseases, Kolkata, contributed to this article.

(Disclaimer: The views expressed above are the author's own. They do not necessarily reflect the views of DH.)