AI search engine Perplexity founder and CEO Aravind Srinivas believes India is on the wrong path when it comes to building AI models.

Taking to X, Srinivas said, "Re India training its foundation models debate: I feel like India fell into the same trap I did while running Perplexity."

Notably, Perplexity AI uses almost all of the major foundational models to provide real-time answers to users' queries on the platform.

Srinivas believes that instead of finetuning a foundational model, Indian companies should focus on training their models from scratch.

He said that while the thinking models are going to be costly to train, India "must show the world that it’s capable of ISRO-like feet (sic) for AI".

"I think that’s possible for AI (to train models frugally), given the recent achievements of DeepSeek. So, I hope India changes its stance from wanting to reuse models from open-source and instead trying to build muscle to train their models that are not just good for Indic languages but are globally competitive on all benchmarks," he added.

DeepSeek is a China-based AI company that develops large language models (LLMs). On January 20, the company launched its latest reasoning models, DeepSeek-R1 and DeepSeek-R1-Zero, to take on models like OpenAI-o1.

DeepSeek, which has raised a little over $4 Mn, is being touted as a competitor to OpenAI.

"I’m not in a position to run a DeepSeek-like company for India, but I’m happy to help anyone obsessed enough to do it and open-source the models," said Srinivas.

Currently, the majority of Indian AI companies are building products finetuned on open-source foundational models. However, a few notable exceptions include Ola's Krutrim AI and the Indian government-backed BharatGen.

It is pertinent to note that Infosys cofounder Nandan Nilekani, in October last year, said that Indians shouldn't be focusing on building foundational models from scratch. Instead, they should create synthetic data and smaller models quickly.

"We will use it (open-source foundational models) to create synthetic data, build small language models quickly, and train them using appropriate data," he said at the time.

One of the major AI companies in India probably following his advice is Sarvam AI. The Lightspeed-backed startup is focusing on training its AI models using synthetic data.

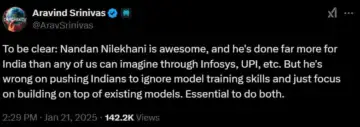

However, Srinivas doesn't agree with Nilekani. "… He’s (Nilekani) wrong in pushing Indians to ignore model training skills and just focus on building on top of existing models. (It is) essential to do both," Srinivas wrote in a separate post.

Industry veterans like Google India research director Manish Gupta have previously shared similar opinions, saying that India will benefit from building foundational models.

Notably, Srinivas met Prime Minister Narendra Modi last month. After the meeting, he said, "…we had a great conversation about the potential for AI adoption in India and across the world."

Should India Build Or Train?

While Srinivas' views stem from his experience of operating in the US market, investors and founders active in India seem to have a different view on the subject.

Ankush Sabharwal, founder and CEO of conversational AI platform CoRover.ai, told Inc42 that investors in India have a different thought process. "For Indians, it makes more sense to finetune an open source model and build a solution to a problem that brings revenue… People in the US build it because they can afford to build such models and not expect revenue in the near future," he added.

Echoing this sentiment, VC firm Inflexor Ventures partner Murali Krishna Gunturu said that there is value in building applied AI models on top of existing LLMs to solve real-life problems.

"We strongly believe that billion-dollar businesses can be built in these spaces and building these businesses also require strong skill sets of understanding existing LLMs, understanding customer requirements and building a scalable solution on top of these foundational models that actually meet the customer needs," Gunturu said.

Meanwhile, Apurv Agarwal, CEO of AI-driven telecalling platform SquadStack, said that India's strengths differ from those of the US. "The global AI race isn't just about power - it's about combining strong foundations with innovative tools. India's strength lies in creating systems where these elements work together."

Notably, most Indian startups have built solutions on top of the existing foundational models. Building a foundational model from scratch requires a completely different approach.

"When contemplating whether to develop foundational models, the primary factors influencing the choices of founders are matters like access to computing and hardware resources, specialised domain expertise, and groundbreaking insights that can enable them to compete with companies that have already raised substantial amounts of capital," said Pranav Pai, founding partner of 3one4 Capital.

It is pertinent to highlight that OpenAI has raised more than $17 Bn since its inception about 9 years ago.

Nevertheless, years of experience, and not just big-ticket fundraises, also seem to help companies gain an edge in the AI race. For instance, while DeepSeek has raised only a small amount of capital to date, it was founded by Liang Wenfeng, who also helmed High-Flyer Quant - one of the largest AI-backed hedge funds in China.

Until 2019, High-Flyer Quant managed about $1.4 Bn in assets, according to a report by the South China Morning Post.

"In our view, this topic has a lot more nuance, wherein we should take into account the time, effort and resources involved in training and building a foundational model and our ambitions of being at the cutting edge and competing with countries like the US/China," said Gunturu.