Introduction: AI Ambition Requires Infrastructure Maturity

For Indian businesses, artificial intelligence is no longer an experimental frontier.

Adoption is spreading across industries, from conversational AI in customer service to computer vision in manufacturing and predictive analytics in financial services. Industry estimates project that digital transformation initiatives and data-driven decision-making will drive India's AI market to grow at a compound annual growth rate (CAGR) of over 20% in the coming years.

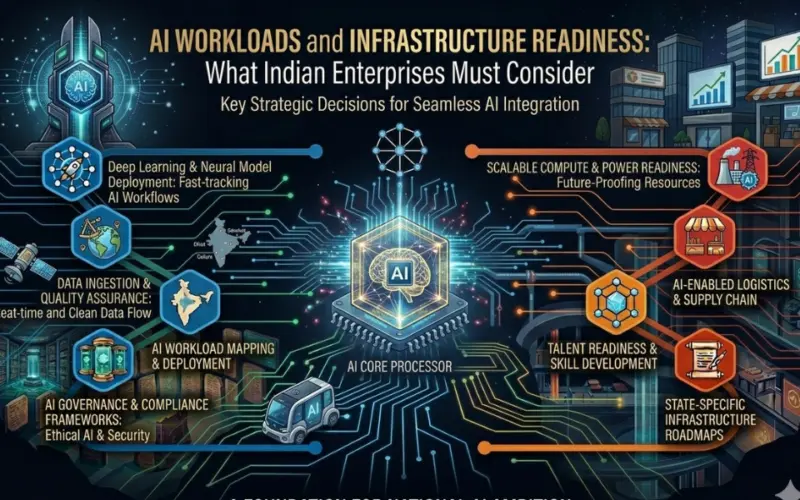

However, deploying AI at scale is fundamentally different from running traditional enterprise applications. AI workloads demand high-performance computing, scalable storage, and continuous optimization. Infrastructure readiness, therefore, becomes a strategic prerequisite rather than a backend operational concern.

The Expanding AI Landscape in India

Indian enterprises are integrating AI across multiple domains:

- Fraud detection in fintech

- Demand forecasting in retail

- Diagnostics support in healthcare

- Intelligent automation in IT operations

These applications generate and process large volumes of structured and unstructured data. Model training demands substantial computational resources, while inference workloads require low latency and high availability.

For experimentation, many organizations start with flexible environments such as Linux VPS hosting, which provides cost-efficient virtualized infrastructure for development and testing. Enterprise-grade AI adoption, however, requires an infrastructure vision that extends well beyond these initial setups.

Infrastructure Challenges in Scaling AI Workloads

While AI pilots often succeed in controlled environments, scaling them across departments or customer bases introduces complexities.

1. Compute and GPU Requirements

AI model training involves parallel processing and high memory usage. Enterprises must assess:

- CPU vs. GPU workload distribution

- Memory bandwidth requirements

- Resource isolation across teams

Underestimating compute demand can lead to prolonged training cycles and performance bottlenecks.
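
To make "high memory usage" concrete, the sketch below estimates the accelerator memory needed just to hold model state during training. It assumes mixed-precision training with the Adam optimizer, for which a commonly cited rule of thumb is about 16 bytes per parameter (fp16 weights and gradients, fp32 master weights, and two fp32 Adam moments). Activations, batch size, and framework overhead are deliberately ignored, so the result is a lower bound; the function names and the 80 GB device size are illustrative assumptions.

```python
import math

# Rule-of-thumb bytes per parameter for mixed-precision training with Adam
# (assumption): fp16 weights (2) + fp16 gradients (2)
# + fp32 master weights (4) + fp32 Adam first and second moments (4 + 4).
BYTES_PER_PARAM_MIXED_ADAM = 2 + 2 + 4 + 4 + 4  # = 16


def training_state_gb(num_params: int,
                      bytes_per_param: int = BYTES_PER_PARAM_MIXED_ADAM) -> float:
    """Lower-bound memory (GB, 1e9 bytes) for weights, gradients and optimizer state.

    Activations and framework overhead are NOT included.
    """
    return num_params * bytes_per_param / 1e9


def min_devices(num_params: int, device_memory_gb: float = 80.0) -> int:
    """Hypothetical helper: fewest devices needed if model state is sharded evenly."""
    return math.ceil(training_state_gb(num_params) / device_memory_gb)


if __name__ == "__main__":
    for params in (1_000_000_000, 7_000_000_000):
        print(f"{params / 1e9:.0f}B params: {training_state_gb(params):.0f} GB of model state, "
              f">= {min_devices(params)} x 80 GB devices")
```

For a 7-billion-parameter model this gives 112 GB of model state alone, more than a single 80 GB device holds, which is one reason dedicated training capacity is sized differently from development or inference environments.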

2. Data Management Complexity

AI systems depend heavily on data pipelines. Challenges include:

- Managing distributed datasets

- Ensuring data quality and consistency

- Complying with data localization regulations

Without a structured storage architecture and clear governance, model outputs may be unreliable.
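
One lightweight way to enforce data quality and consistency is to validate each batch before it reaches training. The sketch below assumes records arrive as Python dictionaries; the three-field schema, the `txn_id` key, and the 1% missing-value threshold are hypothetical choices for illustration, and production pipelines would typically use a dedicated validation tool.

```python
from typing import Any

# Hypothetical schema for illustration: field name -> expected Python type.
EXPECTED_SCHEMA: dict[str, type] = {"txn_id": str, "amount": float, "region": str}


def quality_report(records: list[dict[str, Any]],
                   schema: dict[str, type] = EXPECTED_SCHEMA,
                   id_field: str = "txn_id") -> dict[str, Any]:
    """Count missing values, type mismatches and duplicate IDs in one batch."""
    missing = {name: 0 for name in schema}
    wrong_type = {name: 0 for name in schema}
    seen_ids: set[Any] = set()
    duplicates = 0

    for rec in records:
        for name, expected in schema.items():
            value = rec.get(name)
            if value is None:
                missing[name] += 1
            elif not isinstance(value, expected):
                wrong_type[name] += 1
        rec_id = rec.get(id_field)
        if rec_id is not None:
            if rec_id in seen_ids:
                duplicates += 1
            seen_ids.add(rec_id)

    return {"rows": len(records), "missing": missing,
            "wrong_type": wrong_type, "duplicate_ids": duplicates}


def passes(report: dict[str, Any], max_missing_ratio: float = 0.01) -> bool:
    """Accept the batch only if no field exceeds the missing-value ratio,
    no type mismatches were found and no IDs are duplicated."""
    rows = max(report["rows"], 1)
    too_many_missing = any(n / rows > max_missing_ratio
                           for n in report["missing"].values())
    return (not too_many_missing
            and not any(report["wrong_type"].values())
            and report["duplicate_ids"] == 0)


batch = [
    {"txn_id": "t1", "amount": 250.0, "region": "IN-MH"},
    {"txn_id": "t1", "amount": "250", "region": None},  # duplicate ID, bad type, missing region
]
report = quality_report(batch)
print(report["missing"]["region"], report["wrong_type"]["amount"], report["duplicate_ids"])  # 1 1 1
print(passes(report))  # False
```

Rejecting a batch at this stage is usually cheaper than discovering, after training, that a model learned from inconsistent inputs.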

3. Latency and Real-Time Processing

Applications such as fraud detection or recommendation engines require near real-time inference. Infrastructure must support:

- Low-latency networking

- Edge deployment where necessary

- Load balancing across inference endpoints
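
Load balancing across inference endpoints can be illustrated with a least-connections policy, one common strategy among several (round-robin and latency-weighted routing are alternatives). This is an in-process sketch under assumed names; the `10.0.0.x` addresses are placeholders, and real deployments would normally rely on a dedicated load balancer or service mesh rather than application code.

```python
from dataclasses import dataclass, field


@dataclass
class Endpoint:
    url: str              # placeholder inference endpoint address
    healthy: bool = True  # assumed to be updated by an external health check
    in_flight: int = 0    # requests currently being served


@dataclass
class LeastConnectionsBalancer:
    endpoints: list[Endpoint] = field(default_factory=list)

    def acquire(self) -> Endpoint:
        """Pick the healthy endpoint with the fewest in-flight requests."""
        candidates = [e for e in self.endpoints if e.healthy]
        if not candidates:
            raise RuntimeError("no healthy inference endpoints")
        chosen = min(candidates, key=lambda e: e.in_flight)  # ties: earliest in list
        chosen.in_flight += 1
        return chosen

    def release(self, endpoint: Endpoint) -> None:
        """Mark one request on this endpoint as finished."""
        endpoint.in_flight = max(0, endpoint.in_flight - 1)


lb = LeastConnectionsBalancer([
    Endpoint("http://10.0.0.1:8000"),
    Endpoint("http://10.0.0.2:8000"),
    Endpoint("http://10.0.0.3:8000", healthy=False),  # failed health check
])
first = lb.acquire()   # 10.0.0.1 (tie broken by list order)
second = lb.acquire()  # 10.0.0.2 (10.0.0.1 is now busier)
lb.release(first)
third = lb.acquire()   # 10.0.0.1 again
```

Routing on in-flight requests rather than strict rotation helps when inference calls vary in duration, which is common with variable-length inputs.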

4. Cost Optimization vs. Performance

AI workloads can significantly increase cloud expenditure. While Linux VPS hosting may offer an economical starting point, enterprises must continuously evaluate:

- Utilization rates

- Auto-scaling policies

- Idle resource management

Strategic planning prevents unexpected cost escalation during growth phases.
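
Auto-scaling policies and idle-resource checks often reduce to simple arithmetic on utilization data. The sketch below borrows the target-tracking rule documented for the Kubernetes Horizontal Pod Autoscaler (desired = ceil(current replicas × current metric ÷ target metric), skipped when the ratio is within a 10% default tolerance) and adds a naive idle-instance filter. Utilization values are percentages; the replica bounds, instance names, and 5% idle threshold are illustrative assumptions.

```python
import math


def desired_replicas(current_replicas: int, current_util: float, target_util: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Target-tracking rule modeled on the Kubernetes HPA formula:
    desired = ceil(current_replicas * current_util / target_util),
    left unchanged when the ratio is within `tolerance` of 1.0,
    then clamped to [min_replicas, max_replicas]."""
    ratio = current_util / target_util
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: avoid flapping
    else:
        desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


def idle_instances(utilization: dict[str, float], threshold: float = 5.0) -> list[str]:
    """Flag instances whose average utilization (%) is below a hypothetical threshold."""
    return sorted(name for name, util in utilization.items() if util < threshold)


print(desired_replicas(4, 90, 60))   # 6
print(desired_replicas(4, 63, 60))   # 4 (within tolerance)
print(desired_replicas(8, 120, 60))  # 10 (clamped to max_replicas)
print(idle_instances({"gpu-a": 2.0, "gpu-b": 55.0, "gpu-c": 0.0}))  # ['gpu-a', 'gpu-c']
```

The upper bound doubles as a cost guardrail: without it, a traffic spike or a misbehaving metric could scale expensive GPU capacity without limit.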

Best Practices for AI Infrastructure Readiness

Enterprises can improve the odds of successful AI deployment by following a structured infrastructure strategy.

Build a Tiered Architecture Model

A multi-layered setup typically includes:

- Development and experimentation environments

- Dedicated training clusters

- Production-grade inference infrastructure

- Secure backup and disaster recovery systems

This separation enhances reliability and performance predictability.

Prioritize Containerization and Orchestration

Container technologies and orchestration platforms help:

- Standardize deployment environments

- Enable workload portability

- Simplify scaling during peak demand

This approach reduces dependency on rigid infrastructure models.

Implement Observability and Monitoring

AI systems require continuous monitoring of:

- Model performance metrics

- Resource utilization trends

- Data drift and anomaly detection

Proactive observability helps maintain consistent output quality and system health.
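
Data drift detection can start with a simple statistic that compares live inputs with the training baseline. The sketch below uses the Population Stability Index (PSI), one of several possible drift measures (the Kolmogorov-Smirnov test is another), computed over quantile bins of the baseline sample. The 0.1 and 0.25 cut-offs are a widely used rule of thumb rather than a standard, and the bin count and `eps` floor are assumptions.

```python
import math
from bisect import bisect_right


def psi(expected: list[float], actual: list[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a live sample.

    Bin edges are quantiles of the baseline; eps avoids log(0) for empty bins.
    """
    ref = sorted(expected)
    # bins - 1 interior cut points taken at baseline quantiles.
    edges = [ref[int(len(ref) * k / bins)] for k in range(1, bins)]

    def shares(sample: list[float]) -> list[float]:
        counts = [0] * bins
        for x in sample:
            counts[bisect_right(edges, x)] += 1
        return [max(c / len(sample), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_level(value: float) -> str:
    """Map a PSI value to a label using the common 0.1 / 0.25 rule of thumb."""
    if value < 0.1:
        return "stable"
    if value < 0.25:
        return "moderate"
    return "significant"


baseline = [i / 1000 for i in range(1000)]       # uniform on [0, 1)
shifted = [0.5 + i / 2000 for i in range(1000)]  # uniform on [0.5, 1)
print(drift_level(psi(baseline, baseline)))  # stable
print(drift_level(psi(baseline, shifted)))   # significant
```

Running a check like this per feature on a schedule, and alerting on the "significant" band, is one way to turn drift monitoring into an operational signal rather than an occasional manual review.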

Embed Security and Governance Frameworks

AI systems must comply with regulatory standards and enterprise governance policies. Infrastructure planning should include:

- Role-based access control

- Encryption at rest and in transit

- Comprehensive audit logging

Security integration at the infrastructure level reduces long-term operational risk.
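
Role-based access control and audit logging can be prototyped together: each access decision is checked against a role-to-permission map and recorded as a structured log line. The roles, permission strings, user names, and log fields below are hypothetical; in practice these controls usually live in the platform's identity and access management service, while encryption at rest and in transit is handled by the storage and network layers rather than application code.

```python
import json
import logging
from datetime import datetime, timezone

# Hypothetical role -> permission mapping for an ML platform.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "data_scientist": {"dataset:read", "model:train"},
    "ml_engineer": {"dataset:read", "model:train", "model:deploy"},
    "auditor": {"audit:read"},
}

audit_log = logging.getLogger("audit")


def is_allowed(user_roles: list[str], permission: str) -> bool:
    """Allowed if any of the user's roles grants the permission; unknown roles grant nothing."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in user_roles)


def authorize(user: str, user_roles: list[str], permission: str) -> bool:
    """Check access and write a structured audit record for every decision."""
    allowed = is_allowed(user_roles, permission)
    audit_log.info(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "roles": user_roles,
        "permission": permission,
        "decision": "allow" if allowed else "deny",
    }))
    return allowed


logging.basicConfig(level=logging.INFO)
authorize("asha", ["data_scientist"], "model:deploy")  # False; a "deny" record is logged
authorize("ravi", ["ml_engineer"], "model:deploy")     # True; an "allow" record is logged
```

Logging denials as well as approvals matters: repeated denied attempts are often the first visible sign of misconfigured roles or misuse.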

Strategic Impact on Innovation and Competitiveness

Infrastructure-ready enterprises gain multiple advantages:

- Faster AI experimentation cycles

- Reduced downtime during scale transitions

- Greater investor and stakeholder confidence

- Stronger global competitiveness

Infrastructure maturity will be crucial to business success as India positions itself as a global hub for AI talent. Businesses that invest early in scalable, secure, high-performance systems are better placed to turn AI pilots into long-term value.

Adopting distributed infrastructure also enables enterprises beyond metro cities to participate actively in AI innovation, contributing to a more inclusive digital economy.

Conclusion: Preparing for AI-Driven Growth

AI transformation is not solely about algorithms and data scientists. It is equally about the systems that power model training, deployment, and continuous optimization.

Indian enterprises must evaluate compute capacity, storage architecture, governance policies, and scalability frameworks before scaling AI initiatives. While environments such as Linux VPS hosting may support early experimentation, sustainable AI growth demands a comprehensive infrastructure roadmap.

By aligning technical architecture with long-term AI strategy, enterprises can ensure that innovation efforts translate into measurable business outcomes and ecosystem advancement.


Disclaimer

This content is a community contribution. The views and data expressed are solely those of the author and do not reflect the official position or endorsement of nasscom.


Co-Founder and CMO

Technology thrives at the intersection of innovation and infrastructure. My dual role reflects my commitment to both: fueling innovation at my company and strengthening the industry's infrastructure through NASSCOM's council. Together, we're not just navigating change; we're laying down the tracks for progress.