The rapid acceleration of artificial intelligence has fundamentally changed how infrastructure is designed and deployed. Organizations are no longer building systems for traditional workloads; they are building AI-native environments that require massive parallel compute, high-bandwidth memory, and ultra-fast interconnects.

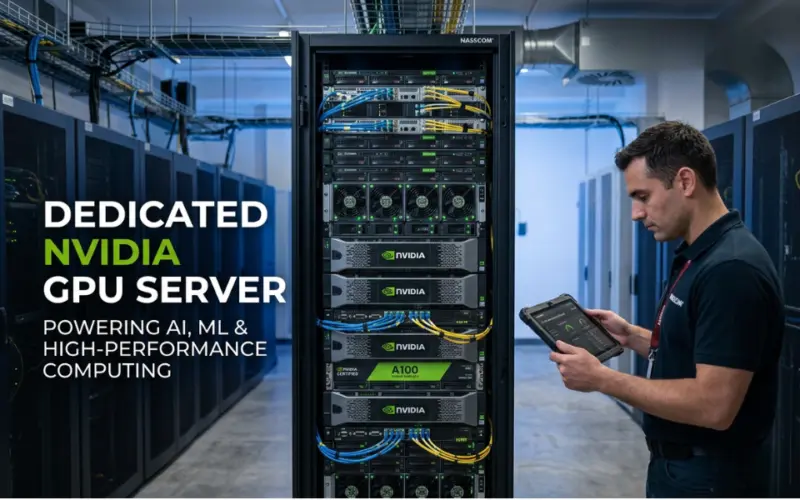

This shift has significantly increased demand for Dedicated NVIDIA GPU Server infrastructure, especially among teams struggling with performance limitations, unpredictable cloud costs, and scaling inefficiencies. This reference on Dedicated NVIDIA GPU Server gives a structured overview of deployment strategies and infrastructure design.

In 2026, the challenge is no longer access to GPUs; it is using them efficiently at scale.

What 2026 Search Queries Reveal About GPU Infrastructure

Modern search intent has shifted toward operational challenges:

- "Why is my AI model training slow even with GPUs?"

- "Dedicated GPU vs cloud GPU cost comparison"

- "How to scale GPU workloads without latency issues?"

- "Why is GPU utilization low in AI workloads?"

These queries reflect a deeper issue: GPU infrastructure is powerful but often inefficiently used.

The Real Problems in Dedicated GPU Infrastructure (2026)

1. Extreme Cost Pressure and Resource Inefficiency

AI infrastructure costs have surged dramatically.

- global AI infrastructure spending is growing rapidly, reaching hundreds of billions of dollars

- GPU systems are often underutilized despite high investment

- inefficient workload scheduling leads to wasted compute

In many cases, companies invest in high-end GPU clusters but fail to optimize usage, leading to poor ROI.
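The link between utilization and ROI can be made concrete with a small calculation. The sketch below is illustrative only: the function name and the hourly price are hypothetical, and it assumes that cost is paid for every hour while only the utilized fraction produces useful work.

```python
def cost_per_useful_gpu_hour(hourly_cost: float, utilization: float) -> float:
    """Effective cost of one hour of actual GPU work.

    hourly_cost -- what one GPU-hour costs (hypothetical figure)
    utilization -- fraction of time the GPU does useful work, in (0, 1]
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return hourly_cost / utilization


if __name__ == "__main__":
    # Hypothetical price of 2.00 per GPU-hour at three utilization levels.
    for u in (0.25, 0.50, 0.90):
        cost = cost_per_useful_gpu_hour(2.0, u)
        print(f"utilization {u:.0%}: {cost:.2f} per useful GPU-hour")
```

Under these assumptions, a GPU that is busy a quarter of the time costs four times its list price per hour of useful work.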

2. Underutilization of GPU Resources

Even advanced systems fail to achieve full efficiency.

- GPU compute units are often reported to operate at only 15-30% utilization in real workloads

- CPU bottlenecks and scheduling delays reduce performance

- improper workload distribution creates idle resources

This explains why simply adding more GPUs does not always improve performance.
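A toy model can illustrate why extra GPUs stop helping once another stage becomes the bottleneck. This is a simplified sketch, not a measurement: it assumes compute splits perfectly across GPUs, that a shared input pipeline (CPU preprocessing, storage) does not get faster, and that the two overlap fully. The function names and timings are hypothetical.

```python
def step_time(compute_s: float, data_s: float, n_gpus: int) -> float:
    """Time per training step under the simplifying assumptions above.

    compute_s -- compute time for one step on a single GPU
    data_s    -- time the shared input pipeline needs to feed one step
    """
    if n_gpus < 1:
        raise ValueError("n_gpus must be at least 1")
    # The slower of the two stages determines the step time.
    return max(compute_s / n_gpus, data_s)


def speedup(compute_s: float, data_s: float, n_gpus: int) -> float:
    """Speedup relative to a single GPU."""
    return step_time(compute_s, data_s, 1) / step_time(compute_s, data_s, n_gpus)


if __name__ == "__main__":
    for n in (1, 2, 4, 8, 16):
        print(f"{n:2d} GPUs: speedup {speedup(8.0, 2.0, n):.1f}x")
```

With 8 seconds of compute and 2 seconds of data loading per step, the speedup in this model flattens at 4x; going from 4 to 16 GPUs adds cost but no throughput.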

3. Infrastructure Complexity and Scaling Challenges

Dedicated GPU environments require specialized architecture.

Key requirements include:

- high-bandwidth memory (HBM)

- multi-GPU interconnects like NVLink

- distributed storage systems

Traditional infrastructure is not designed for these needs, making scaling difficult.

4. Supply Constraints and Market Volatility

The GPU market in 2026 is highly unpredictable.

- demand for AI servers is expected to grow over 20% annually

- supply chain limitations and chip shortages impact availability

- pricing volatility makes long-term planning difficult

Organizations must plan infrastructure carefully to avoid delays and cost spikes.

5. Security and Operational Risks

As GPU infrastructure scales, risks increase.

- centralized GPU clusters become high-value attack targets

- proprietary architectures reduce transparency

- complex systems increase failure points

Security is now a critical consideration, not an afterthought.

Dedicated vs Cloud GPU: A Practical Comparison

1. Cloud GPU Infrastructure

Advantages

- on-demand scalability

- no upfront hardware investment

- faster deployment

Limitations

- unpredictable costs

- shared resource contention

- limited performance consistency

Cloud GPUs are ideal for short-term or experimental workloads.

2. Dedicated NVIDIA GPU Servers

Organizations adopting Dedicated NVIDIA GPU Server infrastructure typically prioritize performance and control.

Advantages

- consistent performance

- full resource control

- optimized for long-running workloads

Limitations

- higher upfront cost

- requires infrastructure expertise

- scaling requires planning
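The trade-off between cloud and dedicated servers often comes down to how many hours per month the GPUs are actually needed. The following is a minimal sketch of a break-even calculation; the prices are hypothetical, and it ignores staffing, power, and data transfer costs.

```python
def break_even_hours(dedicated_monthly: float, cloud_hourly: float) -> float:
    """Monthly usage (in hours) at which both options cost the same."""
    if cloud_hourly <= 0:
        raise ValueError("cloud_hourly must be positive")
    return dedicated_monthly / cloud_hourly


def cheaper_option(hours: float, dedicated_monthly: float, cloud_hourly: float) -> str:
    """Return which option costs less for the given monthly usage."""
    cloud_cost = hours * cloud_hourly
    return "dedicated" if cloud_cost > dedicated_monthly else "cloud"


if __name__ == "__main__":
    # Hypothetical prices: 1500 per month dedicated, 3.00 per hour in the cloud.
    print(break_even_hours(1500.0, 3.0))
    for hours in (200, 600):
        print(hours, "hours ->", cheaper_option(hours, 1500.0, 3.0))
```

Below the break-even point the cloud is cheaper in this model, which matches the observation that cloud GPUs suit short-term or experimental work while dedicated servers suit long-running workloads.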

3. Hybrid GPU Strategy

A hybrid model is emerging as the preferred approach.

Advantages

- combine flexibility of cloud with performance of dedicated servers

- optimize cost and workload distribution

- improve resource utilization

This approach allows organizations to balance performance and cost effectively.
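As a rough illustration of the hybrid idea, the sketch below compares three ways of serving the same hourly GPU demand: cloud only, dedicated servers sized for the peak, and a dedicated baseline with cloud bursts. The demand profile, the prices, and the function name are all hypothetical.

```python
def hybrid_cost(demand, dedicated_gpus, dedicated_hourly, cloud_hourly):
    """Total cost of serving `demand` (GPUs needed in each hour).

    Dedicated GPUs are paid for every hour whether used or not;
    demand above the dedicated capacity is served by cloud GPUs.
    """
    fixed = dedicated_gpus * dedicated_hourly * len(demand)
    burst_gpu_hours = sum(max(0, d - dedicated_gpus) for d in demand)
    return fixed + burst_gpu_hours * cloud_hourly


if __name__ == "__main__":
    demand = [4, 4, 4, 16, 4, 4]  # hypothetical: one short peak
    for label, gpus in (("cloud only", 0), ("dedicated for peak", 16), ("hybrid", 4)):
        total = hybrid_cost(demand, gpus, dedicated_hourly=1.0, cloud_hourly=3.0)
        print(f"{label}: {total:.0f}")
```

In this example the hybrid setup is cheapest because the dedicated baseline stays fully used and the expensive cloud rate is paid only during the peak. A different demand shape or price ratio could favor either of the other two options.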

Key Technical Components of Modern GPU Infrastructure

🔹 High-Speed Interconnects

Technologies like NVLink enable:

- faster GPU-to-GPU communication

- efficient distributed training

- reduced latency

NVIDIA's ecosystem advantage is largely driven by these interconnect technologies.
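To see why interconnect bandwidth matters for distributed training, it can help to estimate how long gradient synchronization takes. The sketch below uses the standard bandwidth term of a ring all-reduce, in which each GPU transfers 2(n-1)/n times the gradient size. It ignores latency and overlap with compute, and the bandwidth values are placeholders, not specifications of any real link.

```python
def ring_allreduce_seconds(size_bytes: float, n_gpus: int, bandwidth_bytes_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce.

    size_bytes            -- size of the gradients being synchronized
    bandwidth_bytes_per_s -- per-GPU link bandwidth (hypothetical value)
    """
    if n_gpus < 1 or bandwidth_bytes_per_s <= 0:
        raise ValueError("need n_gpus >= 1 and positive bandwidth")
    if n_gpus == 1:
        return 0.0  # nothing to synchronize
    return 2 * (n_gpus - 1) / n_gpus * size_bytes / bandwidth_bytes_per_s


if __name__ == "__main__":
    grads = 8e9  # hypothetical: 8 GB of gradients
    for label, bw in (("slower link", 25e9), ("faster link", 400e9)):
        t = ring_allreduce_seconds(grads, 8, bw)
        print(f"{label}: {t * 1000:.0f} ms per synchronization")
```

Because this cost is paid on every training step, a slower link can leave GPUs waiting on communication rather than computing.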

🔹 CUDA Ecosystem and Software Stack

CUDA remains the dominant framework for GPU computing.

- deep integration with AI frameworks

- high switching costs for alternatives

- optimized performance for machine learning workloads

🔹 Storage and Data Pipeline Optimization

GPU performance depends heavily on data flow.

- slow storage creates bottlenecks

- high-throughput pipelines are essential

- data preprocessing impacts training speed
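A quick sizing check can show whether storage is likely to hold the GPUs back. The sketch below is a back-of-the-envelope estimate under stated assumptions: every sample is read from storage once per use (no caching), and the sample rate, sample size, and storage throughput are hypothetical inputs.

```python
def required_read_bytes_per_s(samples_per_s_per_gpu: float, n_gpus: int, bytes_per_sample: float) -> float:
    """Read throughput the storage layer must sustain to keep all GPUs fed."""
    return samples_per_s_per_gpu * n_gpus * bytes_per_sample


def storage_is_bottleneck(required_bytes_per_s: float, available_bytes_per_s: float) -> bool:
    """True when the storage layer cannot deliver data as fast as GPUs consume it."""
    return required_bytes_per_s > available_bytes_per_s


if __name__ == "__main__":
    # Hypothetical: 8 GPUs, 1000 samples/s each, 150 kB per sample.
    need = required_read_bytes_per_s(1000, 8, 150_000)
    print(f"required: {need / 1e9:.1f} GB/s")
    print("bottleneck at 1.0 GB/s:", storage_is_bottleneck(need, 1.0e9))
```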

🔹 Observability and Performance Monitoring

Modern GPU systems require:

- utilization tracking

- workload profiling

- anomaly detection

Without observability, inefficiencies remain hidden.
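Utilization tracking can start very simply. The sketch below parses text in the format produced by `nvidia-smi --query-gpu=index,utilization.gpu,memory.used,memory.total --format=csv,noheader,nounits` and flags GPUs below a threshold. The sample text, the 30% threshold, and the helper names are assumptions for illustration; a production setup would more likely rely on a dedicated monitoring stack.

```python
from dataclasses import dataclass


@dataclass
class GpuSample:
    index: int
    util_pct: float
    mem_used_mib: float
    mem_total_mib: float


def parse_samples(csv_text: str) -> list[GpuSample]:
    """Parse 'index, util, mem_used, mem_total' lines into GpuSample objects."""
    samples = []
    for line in csv_text.strip().splitlines():
        if not line.strip():
            continue  # skip blank lines
        idx, util, used, total = (field.strip() for field in line.split(","))
        samples.append(GpuSample(int(idx), float(util), float(used), float(total)))
    return samples


def underutilized(samples: list[GpuSample], min_util_pct: float = 30.0) -> list[int]:
    """Indices of GPUs whose utilization is below the (hypothetical) threshold."""
    return [s.index for s in samples if s.util_pct < min_util_pct]


if __name__ == "__main__":
    # Made-up sample in the CSV format described above.
    text = "0, 95, 70000, 81920\n1, 12, 2048, 81920\n2, 28, 40000, 81920"
    print("underutilized GPUs:", underutilized(parse_samples(text)))
```

Collecting such samples at regular intervals and storing them over time is what turns a one-off check into workload profiling and anomaly detection.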

Practical Implementation Strategy

To build an efficient GPU infrastructure:

- optimize workload scheduling and batching

- implement distributed training frameworks

- ensure high-speed storage and data pipelines

- monitor GPU utilization continuously

- balance workloads across CPU and GPU resources
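As one example of workload scheduling, the sketch below assigns jobs to GPUs with a greedy "longest job first" heuristic: each job goes to the GPU with the least work so far. This is a simple, well-known approximation and not an optimal scheduler; the job names and durations are hypothetical.

```python
import heapq


def assign_jobs(job_hours: dict[str, float], n_gpus: int) -> list[list[str]]:
    """Greedy longest-job-first assignment; returns the job names per GPU."""
    if n_gpus < 1:
        raise ValueError("n_gpus must be at least 1")
    heap = [(0.0, gpu) for gpu in range(n_gpus)]  # (current load, GPU index)
    heapq.heapify(heap)
    plan = [[] for _ in range(n_gpus)]
    # Place the longest jobs first so short jobs can fill the gaps.
    for name, hours in sorted(job_hours.items(), key=lambda kv: kv[1], reverse=True):
        load, gpu = heapq.heappop(heap)  # least-loaded GPU
        plan[gpu].append(name)
        heapq.heappush(heap, (load + hours, gpu))
    return plan


def makespan(job_hours: dict[str, float], plan: list[list[str]]) -> float:
    """Hours until the busiest GPU finishes."""
    return max(sum(job_hours[name] for name in gpu_jobs) for gpu_jobs in plan)


if __name__ == "__main__":
    jobs = {"a": 8.0, "b": 7.0, "c": 6.0, "d": 5.0, "e": 4.0}
    plan = assign_jobs(jobs, 2)
    print(plan, "finishes after", makespan(jobs, plan), "hours")
```

For these five jobs on two GPUs the heuristic finishes after 17 hours, while a perfect split of the 30 total hours would take 15. The gap shows why scheduling quality directly affects how much of the hardware stays busy.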

At this stage, many organizations move toward structured environments like CloudMinister, where infrastructure design, performance optimization, and resource utilization are aligned.

Future Trends in GPU Infrastructure (2026 and Beyond)

- shift toward AI-native data centers

- rise of custom AI chips alongside GPUs

- increased focus on energy efficiency

- integration of AI-driven infrastructure optimization

The industry is also moving toward platform-level competition, where hardware, software, and ecosystem integration define success, not just raw GPU performance.

Final Thoughts

Dedicated GPU infrastructure is becoming a foundational component of modern computing. However, the real challenge lies not in acquiring hardware but in designing systems that can fully utilize it.

By addressing issues such as cost inefficiency, underutilization, and architectural complexity, organizations can leverage Dedicated NVIDIA GPU Server environments to build scalable, high-performance AI systems that meet the demands of 2026 and beyond.


Disclaimer

This content is a community contribution. The views and data expressed are solely those of the author and do not reflect the official position or endorsement of nasscom.



I'm Devansh Mankani, an SEO Executive at CloudMinister, an IT-based company providing reliable cloud and hosting solutions. I specialize in improving organic visibility, keyword rankings, and traffic through data-driven SEO strategies. CloudMinister offers services like cloud hosting, VPS, dedicated servers, managed hosting, and advanced infrastructure solutions. I work on promoting innovative services such as N8N Hosting for workflow automation and GPU server for AI workloads. My role focuses on aligning technical SEO with business goals to drive growth. I'm passionate about making complex IT services easily discoverable online. I continuously optimize content and performance to strengthen CloudMinister's digital presence.