Google's TPU 8 chips are the company's latest attempt to push deeper into the market for high-end AI hardware, a market long dominated by Nvidia.

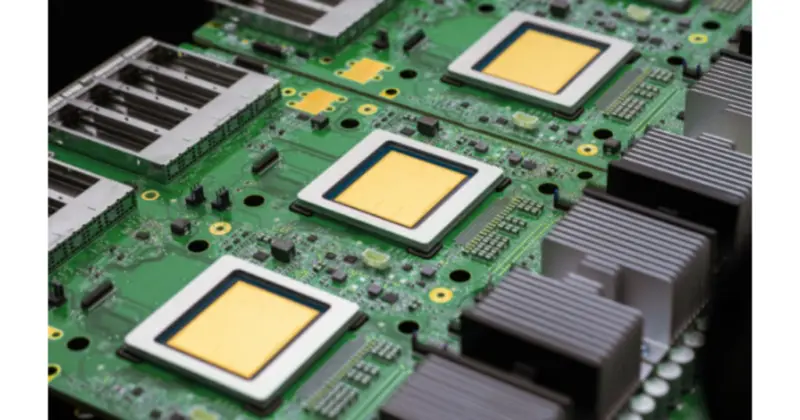

At its Cloud Next 2026 conference, Google unveiled two eighth-generation Tensor Processing Units, TPU 8t and TPU 8i, each tuned for a different part of the AI pipeline.

TPU 8t and TPU 8i are built for increasingly demanding AI workloads, from training huge models to serving them in real time. Google pitches TPU 8t as a "training powerhouse", optimised for large-scale, compute-intensive model training, and says it can cut development cycles from months to weeks.

Google says TPU 8t delivers up to 2.7 times better training price-performance than the previous-generation Ironwood TPU, thanks to higher compute throughput and improved interconnect bandwidth. The platform scales to superpods of thousands of chips, with a single superpod offering as much as 121 exaflops of performance and around two petabytes of high-bandwidth memory.

The TPU 8 family also includes TPU 8i, which targets low-latency inference and "agentic" AI systems that need to reason and react quickly. With significantly more memory bandwidth and up to three times the on-chip SRAM of the previous generation, TPU 8i is designed to keep a model's active data on-chip and so avoid memory bottlenecks.

Both TPU 8t and TPU 8i are paired with Google's new Arm-based Axion CPUs as hosts and rely on fourth-generation liquid cooling, a combination the company says improves performance while keeping energy use in check. Google also claims up to 80 percent better inference performance-per-dollar for TPU 8i compared with its earlier TPUs, positioning the platform as a direct challenger to Nvidia's data-centre chips.

For cloud customers, the TPU 8 line promises faster training, cheaper inference and a more tightly integrated hardware-software stack that supports popular frameworks such as JAX and PyTorch. By placing TPU 8t and TPU 8i at the heart of its data-centre strategy, Google is signalling that it intends not just to narrow the gap with Nvidia but to reshape how large-scale AI systems are built and run.