Every developer working with AI knows this by now. Costs keep climbing. Latency becomes a problem you work around instead of solving. You send data to APIs and hope you didn't miss any fine print about ownership.

Rate limits kick in at the worst possible time. Downtime happens when you're on a deadline. You're building on infrastructure you don't control, and it shows.

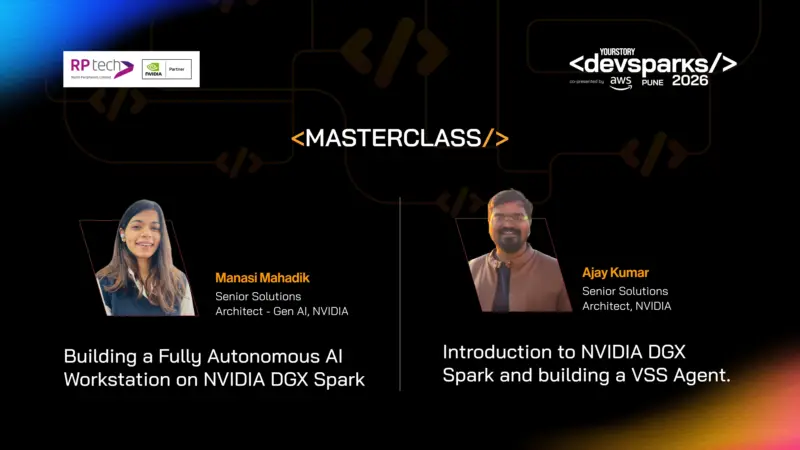

DevSparks Pune is addressing this head-on on February 28 at Oak, Hyatt Regency, with two masterclasses from NVIDIA. Both sessions are centered on a single idea: what if your AI workloads ran entirely on your hardware? No cloud dependency. No external APIs. Just local processing with data center-class performance sitting on your desk. RP Tech, an NVIDIA Partner, is enabling broader access to DGX Spark systems for developers and enterprises exploring local AI deployment.

The system making this possible is NVIDIA DGX Spark, powered by Blackwell architecture. It's compact enough for a workstation but built to handle the kind of AI workloads that usually require server racks. RP Tech's nationwide distribution network ensures that NVIDIA DGX Spark reaches developers and enterprises beyond traditional metro hubs, making high-performance AI hardware more accessible across India. At DevSparks Pune, two focused masterclasses will cover how these systems are built and deployed.

Masterclass: Introduction to NVIDIA DGX Spark and Building a VSS Agent

Ajay Kumar, Senior Solutions Architect at NVIDIA, will lead the first masterclass at 11.30 am. The session focuses on building a Video Search and Summarization agent, something that takes raw video footage and makes it searchable and queryable without touching external services.

Here's what makes it interesting. Vision language models and LLMs work together to understand scenes, generate summaries, and answer natural language questions about video content. All of this happens locally. If you're working with surveillance footage, training videos, medical imaging, or any scenario where privacy matters, this approach means the footage never has to leave your own hardware.

Kumar will walk through the architecture, showing how VSS agents actually get built, and demonstrating what full data privacy at the edge looks like when you're not routing everything through cloud APIs.

Masterclass: Building a Fully Autonomous AI Workstation on NVIDIA DGX Spark

At 3.30 pm, Manasi Mahadik, Senior Solutions Architect for Gen AI at NVIDIA, will host a session on building fully autonomous AI workstations. The session includes two live demonstrations, both designed around practical workflows that developers can use immediately.

First demo: a private knowledge base using retrieval-augmented generation (RAG). It covers hosting large document libraries locally, having secure conversations with your data, and retrieving answers without network latency or usage costs. It's the kind of setup that makes sense for legal firms, research organizations, or any company dealing with proprietary information that can't leave its infrastructure.
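For readers new to RAG, the retrieval step can be sketched in a few lines of Python. This is an illustration only, not the code from the masterclass: the function names and sample documents are hypothetical, and a simple word-count similarity stands in for the embedding model a real local setup would use. The resulting prompt would then be passed to a model running on your own machine.

```python
import math
import re
from collections import Counter

# Hypothetical sample documents; a real knowledge base would hold far more.
DOCS = [
    "The retention policy keeps client contracts for seven years.",
    "Office hours are nine to five on weekdays.",
    "Contracts must be reviewed by the legal team before signing.",
]

def vectorize(text):
    # Bag-of-words counts; an assumption standing in for a local embedding model.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    # Cosine similarity between two word-count vectors.
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def retrieve(query, documents, top_k=2):
    # Rank documents by similarity to the query and drop those with no overlap.
    q = vectorize(query)
    scored = [(cosine(q, vectorize(d)), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:top_k] if score > 0]

def build_prompt(query, documents, top_k=2):
    # Assemble the text a locally hosted LLM would receive.
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

if __name__ == "__main__":
    print(build_prompt("contracts retention policy", DOCS))
```

Nothing here makes a network call: the documents, the ranking, and the prompt all stay in local memory, which is the property the demo is built around.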

Second demo: intelligent browser automation powered entirely by local models. The AI navigates the web and executes complex workflows autonomously, without depending on remote inference. No API calls. No rate limits. Just models running on your hardware doing what you need them to do.

Both masterclasses assume basic Python familiarity and some understanding of large language models. They're designed for software developers curious about local AI deployment and engineers evaluating on-premise or edge solutions for privacy-sensitive applications.

Why this matters now

There's a shift happening. Cloud AI isn't going anywhere, but the assumption that everything has to run remotely is breaking down. For certain use cases (medical data, financial analysis, proprietary research, government applications), keeping AI workloads on-device isn't just preferable; it's mandatory.

NVIDIA DGX Spark represents where this is heading. Data center performance without the data center footprint. The ability to iterate locally without watching costs pile up or worrying about what's being logged somewhere you can't see.

The masterclasses run 60 minutes each, enough time to see the architecture, watch the demos, and understand how these systems actually get built.

If you've been routing everything through APIs because it seemed like the only option, or if you're evaluating what local AI deployment looks like for real-world applications, it's worth blocking your calendar. February 28. Two sessions. Two different approaches to the same problem. Both showing what's possible when you bring the compute to where the data lives.

To know more or buy DGX Spark, visit here

Sign up for free virtual sessions at GTC 2026.